Large Language Models (LLMs) like GPT, Claude, and LLaMA are powerful at generating human-like responses, but they are only as good as the context they have access to. Without grounding in relevant, timely, or domain-specific knowledge, they can hallucinate, produce outdated information, or simply miss the mark in solving real-world problems.

This is why context is king in AI applications. Two of the most important approaches to extending LLMs with real-world knowledge and capabilities are:

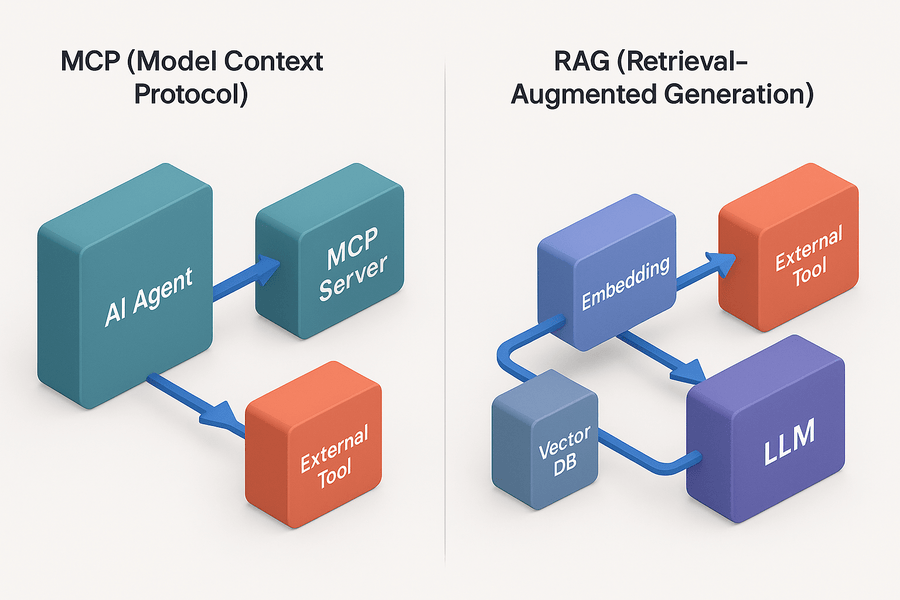

- MCP (Model Context Protocol) – a protocol-driven way for LLMs and AI agents to connect with external tools, APIs, and services.

- RAG (Retrieval-Augmented Generation) – a technique to improve LLM intelligence by retrieving relevant information from external data sources (via embeddings and vector databases).

While both solve the context challenge, they do so in very different ways. Let’s explore each in detail.

What is MCP? (Model Context Protocol)

MCP is a standardized protocol that allows AI agents to connect to external tools, APIs, or services in a structured, secure, and scalable manner. Instead of hardcoding integrations for each service, MCP provides a common language that agents can use to discover, connect, and interact with tools.

MCP has two sides. MCP servers act as gateways that expose tools such as databases, APIs, and cloud services as MCP-compatible endpoints, so they can all be accessed uniformly. MCP clients, which run inside AI agents or assistants, connect to these servers to discover the available tools and query their capabilities.

Standardization is what makes this work smoothly: every MCP server follows the same schema and interface, so an agent can integrate with many services without a custom implementation for each. MCP also addresses secure access through authentication, permission management, and logging of tool usage, which keeps agents within defined boundaries while preserving traceability and compliance. Together, these components form a flexible framework that lets AI systems perform real-world actions safely and at scale.
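The discover-then-call interaction between a client and a server can be sketched in plain Python. This is a simplified, in-process illustration, not the official MCP SDK: it skips the initialization handshake and the transport layer (such as stdio or HTTP), and it models access control as a hypothetical client-ID allow-list. The `get_ticket_status` tool, the `PERMISSIONS` table, and the `AUDIT_LOG` list are invented for illustration; the JSON-RPC 2.0 framing and the `tools/list` and `tools/call` method names follow the MCP specification.

```python
import json
from datetime import datetime, timezone

# HYPOTHETICAL tool registry. Each entry pairs an MCP-style tool descriptor
# (name, description, JSON Schema for the input) with a local Python handler.
TOOLS = {
    "get_ticket_status": {
        "descriptor": {
            "name": "get_ticket_status",
            "description": "Look up the status of a support ticket by ID.",
            "inputSchema": {
                "type": "object",
                "properties": {"ticket_id": {"type": "string"}},
                "required": ["ticket_id"],
            },
        },
        # Stand-in for a real call to a ticketing system such as Jira.
        "handler": lambda args: f"Ticket {args['ticket_id']} is open",
    },
}

# HYPOTHETICAL access control and audit trail; this allow-list is not defined by MCP.
PERMISSIONS = {"support-bot": {"get_ticket_status"}}
AUDIT_LOG: list[dict] = []


def error(req_id, code: int, message: str) -> str:
    """Build a JSON-RPC 2.0 error response."""
    return json.dumps({"jsonrpc": "2.0", "id": req_id,
                       "error": {"code": code, "message": message}})


def handle_request(client_id: str, raw: str) -> str:
    """Handle one JSON-RPC 2.0 request for the tools/list or tools/call method."""
    req = json.loads(raw)
    req_id, method = req.get("id"), req.get("method")
    params = req.get("params", {})
    allowed = PERMISSIONS.get(client_id, set())

    if method == "tools/list":
        # Discovery: only advertise tools this client is permitted to use.
        result = {"tools": [t["descriptor"] for n, t in TOOLS.items() if n in allowed]}
    elif method == "tools/call":
        name = params.get("name")
        if name not in TOOLS or name not in allowed:
            return error(req_id, -32602, f"Unknown or unauthorized tool: {name}")
        text = TOOLS[name]["handler"](params.get("arguments", {}))
        # Record who called which tool, and when, for traceability.
        AUDIT_LOG.append({"client": client_id, "tool": name,
                          "at": datetime.now(timezone.utc).isoformat()})
        result = {"content": [{"type": "text", "text": text}], "isError": False}
    else:
        return error(req_id, -32601, "Method not found")

    return json.dumps({"jsonrpc": "2.0", "id": req_id, "result": result})


# Usage: the client discovers tools first, then calls one.
listing = handle_request("support-bot", json.dumps(
    {"jsonrpc": "2.0", "id": 1, "method": "tools/list"}))
print(json.loads(listing)["result"]["tools"][0]["name"])  # get_ticket_status

call = handle_request("support-bot", json.dumps({
    "jsonrpc": "2.0", "id": 2, "method": "tools/call",
    "params": {"name": "get_ticket_status", "arguments": {"ticket_id": "T-123"}},
}))
print(json.loads(call)["result"]["content"][0]["text"])  # Ticket T-123 is open
```

Because discovery and invocation share one message format, a client written against this interface could work with any server that implements it, which is the standardization benefit in practice. The permission check and audit log show where access control and traceability would hook in.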

Real-World Use Cases of MCP

- Chatbots with tool use: Connecting to calendars, CRMs, or Slack via MCP servers.

- AI Assistants with automation: Triggering cloud deployments or database queries from text/voice prompts.

- Customer Support Agents: Accessing ticketing systems (Zendesk, Jira) through MCP endpoints.

- Financial Assistants: Running API calls to fetch stock prices, portfolio balances, or transaction history.

- DevOps AI Agents: Performing actions in Kubernetes clusters, Terraform, or AWS via MCP tool connectors.

In essence, MCP transforms an LLM into an action-oriented agent, capable not only of talking but also of acting.

What is RAG? (Retrieval-Augmented Generation)

RAG focuses on knowledge retrieval. Instead of expecting the LLM to know everything from its pre-training data, which ends at a training cutoff, RAG pipelines supply fresh, domain-specific context retrieved from external data sources like documents, wikis, or databases, improving the accuracy and relevance of the model’s answers.

The process begins with embedding creation: documents are split into chunks, and each chunk is converted into a high-dimensional vector representation using an embedding model such as OpenAI’s text-embedding-ada-002 or one of Cohere’s embedding models. These vectors capture semantic meaning and are stored in a vector database such as Pinecone, Weaviate, or Milvus, or indexed with a similarity-search library such as FAISS, all of which are optimized for similarity search at scale.
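The "chunks" that the retrieval step returns come from splitting each document before it is embedded. Below is a minimal sketch of that chunking step, assuming simple word-based splitting with a fixed overlap; production pipelines often split by tokens, sentences, or headings instead. The function name, its parameters, the `doc_id#n` ID format, and the `handbook.txt` document name are illustrative choices, not part of any vector database's API. Each chunk's text would then be passed to an embedding model, and the resulting vector stored alongside the chunk's ID and source.

```python
def chunk_text(doc_id: str, text: str, chunk_size: int = 200,
               overlap: int = 40) -> list[dict]:
    """Split a document into overlapping, word-based chunks with metadata.

    Each chunk holds at most `chunk_size` words; consecutive chunks share
    `overlap` words. (Word-based splitting is an illustrative assumption.)
    """
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("require chunk_size > 0 and 0 <= overlap < chunk_size")
    words = text.split()
    step = chunk_size - overlap  # how far each new chunk's start advances
    chunks = []
    for start in range(0, len(words), step):
        chunks.append({
            "id": f"{doc_id}#{len(chunks)}",  # ID to store alongside the vector
            "source": doc_id,
            "text": " ".join(words[start:start + chunk_size]),
        })
        if start + chunk_size >= len(words):  # this chunk reached the end
            break
    return chunks


# Usage with a tiny chunk size so the overlap is visible (hypothetical document).
doc = "one two three four five six seven eight nine ten"
for c in chunk_text("handbook.txt", doc, chunk_size=4, overlap=1):
    print(c["id"], "->", c["text"])
# handbook.txt#0 -> one two three four
# handbook.txt#1 -> four five six seven
# handbook.txt#2 -> seven eight nine ten
```

Overlap trades a little storage for robustness: text near a chunk boundary also appears in the neighboring chunk, so it is less likely to be retrieved without its surrounding context.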

During inference, a retrieval step is performed: the user’s query is embedded with the same model and compared against the database to identify the most contextually relevant chunks of information. Finally, these retrieved chunks are integrated into the LLM’s input through augmented prompting, grounding the model’s responses in domain-specific knowledge. Because the LLM no longer relies solely on its pre-training but dynamically accesses the most relevant information, it can deliver more accurate, context-aware answers, provided the indexed data is itself accurate and up to date.
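The retrieval and augmented-prompting steps can be sketched end to end under loud simplifying assumptions: the `embed` function below is a toy bag-of-words counter standing in for a learned embedding model, the search is a brute-force scan over an in-memory list rather than a vector database, and the documents are hypothetical HR-policy snippets. The helper names (`embed`, `cosine`, `retrieve`, `build_prompt`) are illustrative. Ranking uses cosine similarity, a measure commonly used in vector search.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: lowercase word counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors (0.0 if either is empty)."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, chunks: list[str], k: int = 2) -> list[str]:
    """Retrieval step: embed the query and return the k most similar chunks."""
    q = embed(query)
    return sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)[:k]


def build_prompt(query: str, context: list[str]) -> str:
    """Augmented prompting: place the retrieved chunks ahead of the question."""
    context_block = "\n".join(f"- {c}" for c in context)
    return ("Answer using only the context below.\n"
            f"Context:\n{context_block}\n\n"
            f"Question: {query}")


# Hypothetical HR-policy chunks standing in for an indexed knowledge base.
docs = [
    "Employees accrue 20 vacation days per year.",
    "The office is closed on public holidays.",
    "Expense reports are due within 30 days of purchase.",
]
question = "How many vacation days do employees get?"
top = retrieve(question, docs, k=1)
print(top)  # ['Employees accrue 20 vacation days per year.']
print(build_prompt(question, top))  # this string is what would be sent to the LLM
```

Moving to a real system would mean replacing `embed` and `cosine` with a learned model and dense-vector search in a vector database; the retrieve-then-augment flow stays the same.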

Real-World Use Cases of RAG

- Enterprise Knowledge Assistants: Answering questions from company policies, manuals, or HR docs.

- Legal and Compliance AI: Searching laws, contracts, or regulations to provide evidence-backed summaries.

- Healthcare AI: Pulling medical research, patient records, or treatment guidelines.

- E-commerce Chatbots: Surfacing product catalogs, inventory levels, or FAQs.

- Research Assistants: Exploring scientific literature, academic papers, or industry reports.

With RAG, the LLM doesn’t need to memorize everything; it retrieves the right information at the right time.

MCP vs RAG: Key Differences

| Feature | MCP (Model Context Protocol) | RAG (Retrieval-Augmented Generation) |
|---|---|---|
| Purpose | Connects LLMs to external tools & APIs | Provides factual grounding from external data |
| Context Type | Action context – enables execution (do something) | Knowledge context – provides information (know something) |
| Architecture | Agent ↔ MCP Server ↔ External Tool | User Query ↔ Embedding ↔ Vector DB ↔ LLM |
| Real-time | Yes (live API calls, automation, transactions) | Mostly retrieval from pre-embedded knowledge |
| Best For | Agents that need to take actions | Assistants that need accurate, up-to-date info |
| Limitations | Requires tools/APIs to be MCP-compliant | Relies on embedding quality & data freshness |

Which Should You Use?

- Use MCP when…

    - Your AI agent needs to perform actions, not just provide answers.

    - You want a scalable way to integrate multiple tools (calendars, databases, APIs).

    - You are building multi-modal assistants (voice + actions).

- Use RAG when…

    - Your AI system must be knowledge-rich and context-aware.

    - You’re building search-augmented chatbots or assistants.

    - You need factual accuracy over memorization (e.g., legal, medical, enterprise knowledge).

- Use Both Together when…

    - You want an AI agent that can retrieve knowledge (RAG) and then take action (MCP).

    - Example: A support bot retrieves troubleshooting guides (RAG), then opens a Jira ticket or resets a user password (MCP).
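The combined support-bot flow can be sketched as two steps: a retrieval step that selects a troubleshooting guide, and an MCP `tools/call` request that the agent would send to act on it. Everything here is hypothetical: the guide texts, the word-overlap retrieval (a stand-in for a real RAG pipeline), and the `create_jira_ticket` tool name and its arguments. A real ticketing MCP server would define its own tool names and input schema, which the client would discover through `tools/list`.

```python
import json
import re

# HYPOTHETICAL knowledge base that a RAG index might return results from.
GUIDES = {
    "password-reset": "If a user is locked out, verify their identity, then reset the password.",
    "vpn": "If the VPN fails to connect, reinstall the client and retry.",
}


def words(text: str) -> set[str]:
    """Lowercase word set, ignoring punctuation."""
    return set(re.findall(r"[a-z]+", text.lower()))


def retrieve_guide(question: str) -> tuple[str, str]:
    """Toy RAG step: pick the guide sharing the most words with the question."""
    q = words(question)
    best = max(GUIDES, key=lambda gid: len(q & words(GUIDES[gid])))
    return best, GUIDES[best]


def ticket_request(request_id: int, question: str, guide_id: str) -> str:
    """MCP step: build a JSON-RPC tools/call request for a HYPOTHETICAL ticketing tool."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {
            "name": "create_jira_ticket",  # hypothetical tool name
            "arguments": {"summary": question, "related_guide": guide_id},
        },
    })


question = "The VPN fails to connect on my laptop"
guide_id, guide_text = retrieve_guide(question)  # know something (RAG)
print(guide_text)  # If the VPN fails to connect, reinstall the client and retry.
print(ticket_request(1, question, guide_id))     # do something (MCP)
```

In a full agent, the LLM would read the retrieved guide, decide whether an action is warranted, and only then issue the tool call; the hard-coded sequence here just shows where each technique fits.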

Conclusion

MCP and RAG are not competitors; they are complementary approaches to extending LLMs with context.

- RAG enhances what the model knows by grounding it in external data.

- MCP enhances what the model can do by giving it access to tools and APIs.

In practice, the most powerful AI agents combine both. They can retrieve the right knowledge when asked a question and take the right action when instructed to do something. For AI engineers, the key is to design context-aware pipelines that use RAG for information retrieval and MCP for structured action execution. Together, they move us closer to building truly intelligent, helpful, and reliable AI agents.